by Philip Merrill

Devices for media consumption are designed with varying equipment on board to perform tasks such as information storage and processing. Stationary game consoles or desktop towers are easier to load up with processing support than portable handhelds. Limits on what is practical for portable batteries add energy storage to the on-board roster and to the compromises required during design. Network-Based Media Processing (NBMP) moves processing into the cloud, or other networks, offering designers a new set of choices for how to present media types as well as for processing common formats with high efficiency. In addition to reducing the processing demands placed on the device, remote processing also moves these clock cycles, and the electricity they require, to the cloud and the wall sockets that power it.

As for new media types or uses, loosening processing constraints supports anywhere-computing by making it easier to replicate desktop performance on a mobile phone. Support for videogames and 3D environments is one of many possible use cases, which also include live sports events with crowdsourced uploads. Worldwide, heavy computing problems are already being solved by distributed big data processing, such as citizen-science support for crunching data generated by physics and astronomy.

We are more accustomed to having our data in the cloud than we are to letting the cloud process it. But already our lifestyles are changing thanks to distributed-processing support. MPEG standardization provides a means to accelerate adoption and explore the new goals in sight, as well as to boost general efficiency.

NBMP allows collations of data to be built, shared and even crowd-updated as abstract entities in stored memory, in ways that support efficient processing. In addition to enabling improved media distribution, the many use cases that can be supported by such datacloud-nodes will generate unexpected applications, such as in AR/MR. For example, live uploads can be cross-referenced for relevance or interaction, or combined to create multiangle video stories, in near real time. Such configurations can also be put to work for adaptive bit rate multi-casting, illustrating their useful range.

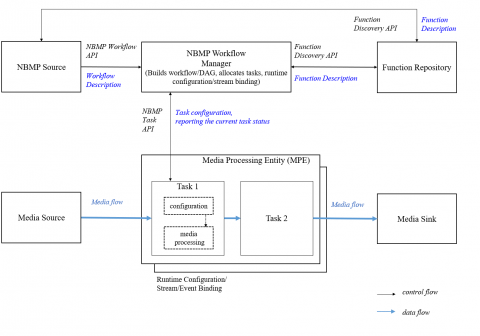

The trick is this combined processing and power advantage, a clearly desirable feature. The impediment has been the usual variety of solutions, which can be coped with by means of a table that in turn needs updating. An alternative to these fragmented solutions is our MPEG NBMP standard, developed to improve two-way and multi-party communication. This provides a reliable processing-in-the-cloud interface for AR, for example, which expects a sedentary back end such as a server bank, better able to handle processing and the accompanying power demands. It is not that handheld devices should become mobile dumb-terminals. Rather, the goal is to leverage the sophistication already in the unit and transmit an upgraded set of experiences, such as AR/MR. VR headsets can also benefit, and no doubt new categories of device will be imagined and made, with suitable processing and power support.

April 2020