By Philip Merrill - May 2016

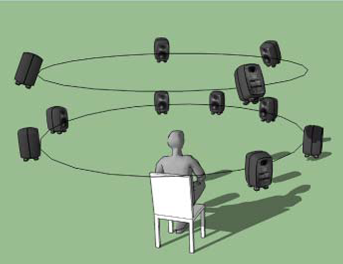

The MPEG-H standard was conceived as a complete Systems-Video-Audio standard in the wake of MPEG-1, MPEG-2 and MPEG-4. The audio part of the standard, called MPEG-H 3D Audio, targets the efficient transmission of a complete 3D audio space from the studio to the listener, overcoming the naturally arising differences between the number and arrangement of the microphones capturing sound sources and of the loudspeakers recreating those sound sources. MPEG-H 3D Audio does this efficiently: it can transmit a full-fledged 22.2 speaker configuration at 1024, 512 and even as little as 256 kbit/s.
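To put those bitrates in perspective, the arithmetic can be sketched as below. This is only a rough illustration: it assumes uncompressed 48 kHz, 24-bit PCM as the reference (neither figure comes from the article), counts 22.2 as 24 channels (22 full-range plus 2 LFE), and averages the bitrate evenly across channels, which a real encoder would not do.

```python
CHANNELS_22_2 = 24  # 22 full-range channels plus 2 LFE channels


def pcm_kbps(channels, sample_rate_hz=48_000, bits_per_sample=24):
    """Bitrate of uncompressed PCM in kbit/s (sample format is an assumption)."""
    return channels * sample_rate_hz * bits_per_sample / 1000


def summarize(total_kbps):
    """Return (average kbit/s per channel, compression ratio vs. assumed PCM)."""
    per_channel = total_kbps / CHANNELS_22_2
    ratio = pcm_kbps(CHANNELS_22_2) / total_kbps
    return per_channel, ratio


for rate in (1024, 512, 256):
    per_channel, ratio = summarize(rate)
    print(f"{rate} kbit/s: {per_channel:.1f} kbit/s per channel on average, "
          f"roughly {ratio:.0f}:1 against PCM")
```

Under these assumptions, even the highest of the three rates is a small fraction of the uncompressed signal.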

The 22.2 configuration improves on 7.1 by including speakers above and below ear level, and its spatial logic guides downmixes to the listener's unique equipment selection, converting to whatever formats are needed for rendering sound at the loudspeakers. These may include various standard immersive sound configurations, arbitrarily non-standard ones as well, speaker systems that are either wireless or mounted on devices, and headphone cables. Random access for break-in and trick modes is always supported. The metadata alongside the audio signal can support adaptations and interactions to optimize delivery in the home environment, including correction for misplaced speakers, and even calculation of unique Head Related Transfer Functions (the response that characterizes how an ear receives a sound from a point in space) so headphone channels are customized for the unique head of a listener.

Objects change directions in the spatial sound scene. The incoming direction of audio adjusts to parameters that convey the size and relative location of the object. This can be extremely granular along the vertical dimension. For game interactivity, directional audio rendering is a necessity and simulating a point of origin is also a common element in movie special effects. The spatial model from the parameters is matched to the 22.2 layout preserving consistent immersive rendering across 3D Audio's wide range of formats.
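As a rough illustration of how an object's direction can be turned into loudspeaker signals, the sketch below uses pairwise amplitude panning in the horizontal plane only. It is not the renderer defined by the standard: the function names, the five-speaker layout, and the restriction to two dimensions are all assumptions made for the example, and it assumes adjacent speakers are less than 180 degrees apart.

```python
import math


def unit(az_deg):
    """Unit vector in the horizontal plane for an azimuth in degrees."""
    a = math.radians(az_deg)
    return (math.cos(a), math.sin(a))


def pan_2d(source_az, speaker_az):
    """Return one gain per speaker for a source at source_az (hypothetical helper).

    The source is placed between the two adjacent speakers that enclose it,
    and the two gains are normalized to constant power.
    """
    order = sorted(range(len(speaker_az)), key=lambda i: speaker_az[i] % 360)
    gains = [0.0] * len(speaker_az)
    p = unit(source_az)
    for k in range(len(order)):
        i, j = order[k], order[(k + 1) % len(order)]
        (a, b), (c, d) = unit(speaker_az[i]), unit(speaker_az[j])
        det = a * d - b * c
        if abs(det) < 1e-9:
            continue  # speakers are collinear with the origin; skip this pair
        # Solve p = gi * speaker_i + gj * speaker_j for the two gains.
        gi = (p[0] * d - p[1] * c) / det
        gj = (a * p[1] - b * p[0]) / det
        if gi >= -1e-9 and gj >= -1e-9:
            gi, gj = max(gi, 0.0), max(gj, 0.0)
            norm = math.hypot(gi, gj)
            gains[i], gains[j] = gi / norm, gj / norm
            return gains
    return gains


# Illustrative layout: left, right, center, left surround, right surround.
speakers = [30, -30, 0, 110, -110]
print(pan_2d(15, speakers))  # source halfway between center and left
```

A source halfway between two speakers receives equal gain on both, while a source sitting exactly on a speaker is reproduced by that speaker alone. As the object's direction parameters change over time, recomputing the gains moves the sound across the layout.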

Another world of directional information is conveyed by the option of source content with Higher-Order Ambisonics (a high spatial resolution full-sphere surround sound technique). HOA has its own spatial modeling format. In a manner similar to object parameters, this model is set onto the 22.2 grid, so to speak, and then contents are adjusted to their proper places of origin in the mixer. In addition to drums, orchestra, and water, test material for HOA included stadium and shouting audience sounds, as well as a helicopter and a synthesized bee.
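The spatial resolution of an Ambisonics signal grows with its order: a full-sphere representation of order N carries (N + 1)^2 coefficient signals. The short sketch below tabulates this and compares it with the 24 channels of a 22.2 layout. The helper name is made up for illustration, and the comparison is informal, since HOA signals are not loudspeaker feeds.

```python
def hoa_signal_count(order):
    """Number of coefficient signals in full-sphere Ambisonics of a given order."""
    if order < 0:
        raise ValueError("order must be non-negative")
    return (order + 1) ** 2


CHANNELS_22_2 = 24  # 22 full-range channels plus 2 LFE channels

for order in range(1, 7):
    count = hoa_signal_count(order)
    relation = "more than" if count > CHANNELS_22_2 else "at most"
    print(f"order {order}: {count} signals ({relation} the {CHANNELS_22_2} channels of 22.2)")
```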

Sporting event broadcasts are often noteworthy for their rich combination of audio sources, commonly including live court action, audience noise, and expert commentary. Depending on the point of view in a shot, such as in the broadcast booth versus on the field, these several sources as well as their directionality can be modified on the fly to suit the scene. This scenario also calls attention to the potential differences between live broadcast material and prerecorded content for which more time spent encoding is an option to achieve higher quality at the same or better bitrate.

At its 110th meeting, October 2014 in Strasbourg, MPEG published the Report on MPEG-H 3D Audio Performance, finding consistently excellent results in listening tests (over 80% MUSHRA) across the 384 kb/s to 1.2 Mb/s bitrate range for several configurations of objects, channels, and HOA, as well as on headphones. While this fulfilled initial goals for high quality, MPEG could not be expected to settle for good at lower bitrates. New work has been carried out, focusing on bitrates from 48 kb/s to 128 kb/s. Aside from the scenario where all sound sources must be delivered on such a reduced bit budget, other cases exist where live voice communication requires low latency without much need for high quality, notably between team members during game play.

3D Audio's range of options leverages its standardized open framework to deliver different immersive audio experiences, suited to various needs. Besides the home theater, personal tablet, and smartphone platforms, audio uses also vary in style, from corporate videoconferences to karaoke and in multiple languages. Another main application scenario was audio-only listening. In the real world this now includes combinations of personal data, purchased digital media, possibly multiple streaming music sources, and information tools for investigating the people and facts behind the music or creating playlists, not to mention social media. Creating a modern multi-channel audio experience for appreciation of the classics can now be expected to require a symphony of applications and services to work well together. What is true for quiet listening must also be flexibly suitable for pumping up the home gym, and both of these need to adjust for different users' unique personalizations. In addition to providing technology suitable for such uses, 3D Audio's open nature and ongoing process help ensure a common development environment.

Engagement with developing technologies is often multifaceted, with major-brand teams, start-ups and home coders sharing creative and technical interests. New directions emerge within this sea of interests, and 3D Audio's common framework is extensible, able to address new needs partly thanks to its original requirements. While virtual reality is not yet a fully developed area in 3D Audio, interacting by changing position in a virtual concert hall was among its original scenarios, as was 3D Video and its changing viewpoints. Although augmented reality is not presently tied in, MPEG is working on AR independently, and such uses are generally compatible with 3D Audio over the long term thanks to extensions and the power of metadata. The hope and expectation with open standards is that the framework technology becomes transparent, enabling tool makers and users to better focus on the creative work they care about.

Directional sound is expected to keep increasing the intensity of peak experiences for its audience, ranging from fictional explosions to romantic music environments. Meanwhile consumer expectations for visual and audio display are wider and more intense than ever, with or without synchronized video and whether on headphones or generated by 3D speaker arrays. It has been in the nature of video entertainment that accompanying sound generates a greater vividness of experience and a sense of emotional presence or reality. No matter how hyperreal video content becomes, the creation of immersive surround sound will be expected to keep up. The creative community seems to be in a surge of 3D creation, and as this body of work grows, having a manageable and uniform standard for delivery should increase in both value and side-benefits. Part of the purpose for developing a digital standard for media delivery is to gain the advantage of interoperable flexibility. For MPEG-H in general and 3D Audio in particular, the advantages are many and already growing. Over time, new options for using 3D Audio can be expected to arrive from fresh directions.
